OUR MISSION IS TRANSPARENCY AND FAIRNESS.

Our mission is to bring equitable school rankings to all families in the US.

We do this because the dominant school rating sites on most major real estate portals use the proficiency model to evaluate school quality. These ratings can misrepresent outperforming schools that have diversity.

Parents should have a more balanced view of school quality when making one of the most significant investments of their lives. SchoolSparrow ratings utilize the value-add model to evaluate test scores as an indicator of school quality. Our ratings tend to elevate outperforming schools that have diversity.

Schools are an integral part of the surrounding community. In addition to educating the community’s children, they employ residents, connect neighbors, and impact home values.

The public perception of a school has an enormous impact on the surrounding community. Families that can afford to pay careful attention to school quality often choose neighborhoods where the local public school receives a high rating.

Today’s dominant rating system results in many neighborhoods getting overlooked by home buyers due to low proficiency scores, while the value-add model shows high scores for the same schools.

At SchoolSparrow, we help change public perception about underrated schools, and we help recognize schools that are outperforming dominant narratives about public education.

SOCIOECONOMICS, TEST SCORES, AND RANKING SITES.

When parents search for real estate, they end up on websites where school ratings are published for the public schools assigned to any property.

Schools that score below a certain threshold are often passed over in favor of schools with higher scores. Generally speaking, our experience indicates that parents shopping for a new home want to see a 7/10 or better for the public school associated with their new home.

This tends to highlight schools in communities with high real estate prices and schools with less racial/ethnic and socioeconomic diversity.

The ubiquitous school rankings on real estate search portals tend to favor schools where parents have high incomes and discount outperforming schools where parents have lower incomes. This is because parent income, not school quality, is the most significant influencer of standardized test scores.

The school ratings at SchoolSparrow elevate outperforming schools to the forefront and shift focus away from parent income.

Our ranking algorithm controls for parent income. This results in a more balanced view of school quality that identifies schools where the educators are making the difference, not socioeconomics.

We aim to spread awareness to parents nationwide that there is more than one way to evaluate test scores. The proficiency model is dominant today, and we believe the value-add model will become more widely adopted in the future. With SchoolSparrow, home buyers can decide which model is suitable for their family.

IT ONLY STARTS THERE.

WHAT DOES MORE TIME AT HOME MEAN?

In the end, by using our system, people will be able to live in more affordable communities, be closer to work, and have more time to spend with their families. If a working parent can spend an extra hour a day with their children, this overcomes the incremental difference between any two schools.

A Better Education

Imagine finding a home that fits your budget and provides your children with an outstanding education. It’s no longer just a great elementary school but a bright future.

More Time At Home

Imagine what happens when you live closer to your job and spend less time commuting; you can spend more time with your children.

Stronger Communities

Imagine what happens when parents and local businesses see the value in their neighborhood public schools and continue to support their growth and their students’ futures.

SCHOOLSPARROW'S METHODOLOGY

SchoolSparrow accounts for two major factors that other ratings sites do not.

1. PARENT INCOME

2. CWD TEST TAKERS

Informationally, we also publish a diversity score based on the number of races that comprise at least 10% of the student population at each school. The max score is 40 points, which means four races are represented by at least 10%, or three are represented by at least 20% of the student population.

FIRST, OUR VISION FOR THE FUTURE

Today, a ubiquitous rating system assigns one number to each school. These scores are everywhere, and they influence the decisions of parents. These ratings tend to favor schools where parents have high incomes, and they tend to underrate urban schools that have diversity.

That's why we've developed a rating system based on the value-add model of evaluating test scores. Value-add ratings reveal more information about each school's influence on student performance. Test scores are one minor detail in the vast ecosystem of a school, and they are absolutely not the only thing to consider. But IF you consider test scores, the value-add method offers an alternative narrative about school quality. It's up to the homebuyer to decide which method suits their family.

In the future, SchoolSparrow will move away from assigning one number to a school. We will incorporate all available data, including suspensions, survey data, growth data, etc. Eventually, parents will be able to customize what is essential to their family when assessing school quality. For example, one parent might focus on attendance and graduation rates for a high school, while another might focus on growth and suspensions for a middle school. SchoolSparrow will rank schools differently for every parent depending on the customized relative weights of these factors. And if a parent wants to consider test scores, they will always be displayed in the context of each school's socioeconomic profile using the value-add model.

OUR MODEL

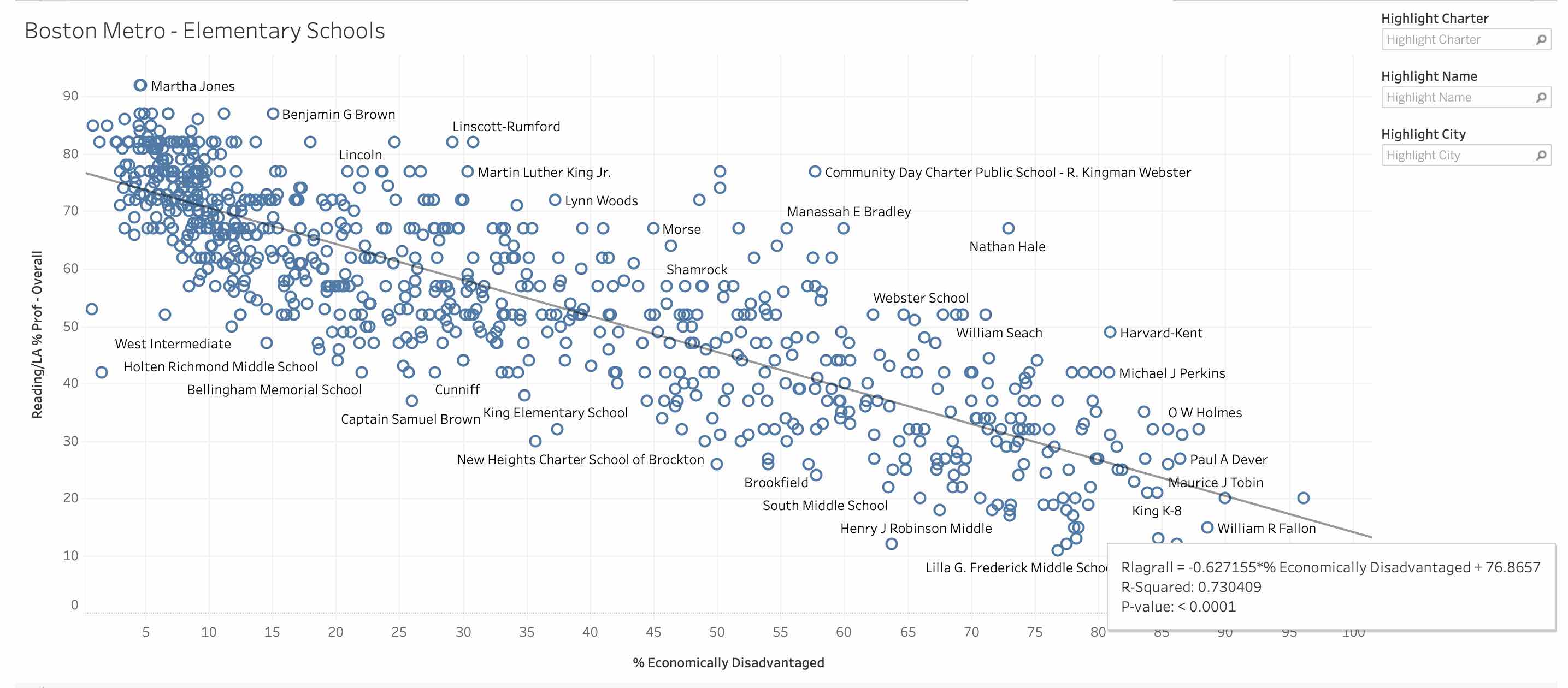

Research has shown that test scores are 70-80% attributable to parent income, not school quality. This is why the existing ratings tend to favor schools where parents have high incomes and assign lower ratings to schools with diversity.

We use data science to build a multi-variable non-linear regression model that predicts the average test score for schools with similar characteristics. We use several variables in our model, including the percentage of kids deemed Economically Disadvantaged (ECD) and the percentage classified as children with disabilities (CWD). Our model predicts the average or expected test scores on the Reading/Language Arts (RLA) portion of the test based on these factors. This way, we reveal more information about how the school influences student performance.

The accompanying graph is an approximation of how our system works. It represents a linear regression that accounts only for parent income. For every public school in the Boston Metro Area, we plot the percentage of students who took the test and are deemed Economically Disadvantaged vs. the school's overall RLA test score.

The resulting scatterplot has a trendline that describes the average test score at every level of parent income. There is a clear relationship: as income falls, so do average test scores. The R-squared of the trendline measures how strongly the two factors are related, that is, the share of the variation in test scores that the trendline explains. Even an R-squared of 0.2 indicates that two things are correlated. The Boston area's R-squared for test performance vs. parent income is very high at 0.73. This mirrors findings by education researchers and scholars, who conclude that test scores are 70-80% attributable to parent income.
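As a rough illustration of the trendline and R-squared described above, here is a minimal sketch in Python. It fits an ordinary least-squares line to a handful of made-up schools. The data, the function names, and the single-variable setup are all assumptions for illustration only; they are not SchoolSparrow's data or production model, which is described as multi-variable and non-linear.

```python
from statistics import mean

def fit_trendline(x, y):
    """Fit an ordinary least-squares line y = slope * x + intercept."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

def r_squared(x, y, slope, intercept):
    """Share of the variation in y that the fitted line explains."""
    y_bar = mean(y)
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1 - ss_res / ss_tot

# Hypothetical schools: % of test takers who are Economically Disadvantaged
# (x axis) and overall RLA test score (y axis). These numbers are invented.
pct_ecd = [5, 15, 30, 45, 60, 75, 90]
rla_score = [82, 80, 66, 62, 45, 47, 30]

slope, intercept = fit_trendline(pct_ecd, rla_score)
r2 = r_squared(pct_ecd, rla_score, slope, intercept)
print(f"trendline: score = {slope:.2f} * pct_ecd + {intercept:.2f}")
print(f"R-squared: {r2:.2f}")
```

A negative slope corresponds to the relationship in the text: as the share of economically disadvantaged students rises, the expected test score falls.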

We call the trendline the average or expected score. In our system, schools are then scored by the extent to which they depart from the trendline. For example, a school near the lower right quadrant (low income, low test scores) that scores 5 points above the trendline will have the same SchoolSparrow score as a school near the upper left quadrant (high income, high test scores) that is also 5 points above the trendline.

Even though these two schools have different test scores, they both exceed expectations to the same degree, so they get the same SchoolSparrow score. Schools on the trendline are roughly a 6/10, which reflects an average score; scores rise as schools move above the trendline and fall as performance drops below it.
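The idea of scoring schools by their distance from the trendline can be sketched as follows. Only two details come from the text: a school on the trendline is roughly a 6/10, and equal departures from the trendline earn equal scores. Everything else here is a labeled assumption, including the example trendline coefficients, the `points_per_step` size, and the clamping to a 1-10 range; the actual SchoolSparrow mapping is not published in this page.

```python
def expected_score(pct_ecd, slope, intercept):
    """Expected (trendline) test score for a given % Economically Disadvantaged."""
    return slope * pct_ecd + intercept

def rating(actual, expected, points_per_step=5.0, baseline=6):
    """Hypothetical mapping from departure-from-trendline to a 1-10 rating.

    baseline=6 follows the text (trendline schools are roughly a 6/10).
    points_per_step and the 1-10 clamp are illustrative assumptions.
    """
    steps = round((actual - expected) / points_per_step)
    return max(1, min(10, baseline + steps))

# Assumed example trendline: score = -0.6 * pct_ecd + 85
SLOPE, INTERCEPT = -0.6, 85.0

# School A: low income (80% ECD), test score 42, which is 5 above its expected 37.
rating_a = rating(42, expected_score(80, SLOPE, INTERCEPT))
# School B: high income (10% ECD), test score 84, which is 5 above its expected 79.
rating_b = rating(84, expected_score(10, SLOPE, INTERCEPT))
# School C: sits exactly on the trendline.
rating_c = rating(55, expected_score(50, SLOPE, INTERCEPT))

print(rating_a, rating_b, rating_c)
```

Schools A and B have very different raw test scores, yet they receive the same rating because each exceeds its own expected score by the same margin.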

DIVERSITY SCORE

Research has shown that students who are part of a diverse environment (if done right) get certain cognitive benefits that cannot be replicated at a homogeneous school. These benefits include better teamwork and decision-making, more empathy, greater acceptance of others, and possibly even higher IQs. Children who learn in these environments are the kids we want leading the next generation.

Each school has a diversity score based on the racial makeup of the school. If a school has at least two races each represented by at least 10% of the population, the school scores a 10/40. If three races are each represented by at least 10%, the school scores a 20/40, and so on up to a 40/40 when at least three races are each represented by at least 20% of the population.
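The tiers stated on this page can be expressed as a small function. This is a sketch under assumptions: it encodes only the thresholds spelled out in the text (10/40, 20/40, and the two ways of reaching 40/40 mentioned in the methodology summary). Any intermediate tier is not spelled out here and so is not modeled, and returning 0 for schools that meet none of the thresholds is an assumption. The function name and the input format are hypothetical.

```python
def diversity_score(shares):
    """Diversity score out of 40.

    shares: dict mapping a racial group label to its fraction of the
    student population (0.0 to 1.0). Only tiers stated in the text are
    encoded; the fallback of 0 is an assumption.
    """
    at_10 = sum(1 for s in shares.values() if s >= 0.10)
    at_20 = sum(1 for s in shares.values() if s >= 0.20)

    if at_20 >= 3 or at_10 >= 4:
        return 40  # three groups at 20%+, or four groups at 10%+
    if at_10 == 3:
        return 20  # three groups at 10%+
    if at_10 == 2:
        return 10  # two groups at 10%+
    return 0       # assumption: no stated tier applies

# Invented example schools
print(diversity_score({"A": 0.50, "B": 0.50}))                        # two groups at 10%+
print(diversity_score({"A": 0.60, "B": 0.25, "C": 0.15}))             # three groups at 10%+
print(diversity_score({"A": 0.40, "B": 0.30, "C": 0.30}))             # three groups at 20%+
print(diversity_score({"A": 0.55, "B": 0.15, "C": 0.15, "D": 0.15}))  # four groups at 10%+
```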

SCHOOLSPARROW'S FOUNDER.

Thomas Brown

SchoolSparrow was founded by Tom Brown, a Chicago real estate professional, website developer, and parent to two daughters who attended Chicago Public Schools. Tom has been a licensed real estate Managing Broker since 2009 and has helped dozens of families conduct school and home searches throughout Chicago and the suburbs. Tom is an Air Force brat and a member of the Pawnee Nation of Oklahoma, a federally recognized Native American tribe with ancestral homelands in Nebraska. A fun fact about Tom is that he is a descendant of Chief Petalasharo, who gave a speech at the White House during the presidency of James Monroe. Tom enjoys cooking, spending time with his family, building new web applications, and practicing Jiu Jitsu.

Tom graduated from the University of Colorado (Boulder) with a BS in Civil Engineering and took a job as a traffic engineer in Dallas. He moved to the Chicago area to attend Northwestern's Kellogg School of Management to pursue an MBA in Real Estate Finance. After graduating from Kellogg, Tom worked as a commercial real estate developer for several years before starting his real estate brokerage business in the wake of the financial crisis. Tom's company, Sparrow Realty (formerly New Urban), operates in a niche focused on transit and public schools in Chicago. However, Tom has found a new passion that has completely changed the trajectory of his career, and he is now focusing on bringing transparent and fair school ratings to parents across the US through SchoolSparrow.

SCHOOLSPARROW'S STORY.

It All Started With Transit

Tom came to understand the problem with school ratings and its impact on real estate over many years of helping parents conduct school and home searches, and through his own experience finding a school for his daughters. It all started when Tom moved to Chicago and tried to find an apartment close to transit. Finding an apartment within a five-minute walk of a train station was frustrating. At the time, Google Maps did not have transit locations, so the process required physically referencing three maps to triangulate the distance between apartment listings and transit stops.

A few years later, Tom owned a brokerage business and sought ways to generate leads for his agents. Based on his experience finding his first apartment in Chicago, he set out to build a website that would allow people to search for a property by transit location. Tom solicited proposals from local software development teams to build the website.

An Unexpected Detour

The cost of building his vision inspired Tom to learn how to code himself. Coincidentally, around the same time, a new Chicago startup called Code Academy (later Starter League) was launched to teach regular people how to code. Tom enrolled in the class, and 12 weeks later, he built the first version of livebytransit.com. Tom was featured in a TEDx talk given by one of his classmates in that first cohort of Code Academy.

Schools: A Problem Bigger Than Transit

Toward the end of the Code Academy class, Tom and his wife were considering where to send their oldest daughter to Kindergarten. They were frustrated at how hard it was to match real estate listings, school attendance boundaries, and school performance data. Tom had just built LiveByTransit, so it was natural to add in school boundary searches. Eventually, Tom found school performance data and created a new website, SchoolSparrow.com, which is still the only place where users can enter their real estate parameters, then see the Top 30 schools that have homes for sale right now matching their requirements. As a result of this website, Tom has helped many families find homes in and around Chicago when schools are a significant factor in the location decision.

School Ratings Sites: A Great Resource?

In the real estate world, it is considered unethical to answer questions like "Is this a good school?" or "Where are the good schools?" As a result, realtors are instructed to refer their clients to other resources where they can do their research. Most real estate agents refer their clients to greatschools.org, which ties into all the real estate search portals such as Zillow, Redfin, and Realtor.com.

An Unfair Rating

When Tom looked up his neighborhood school on the GreatSchools website, it was discouraging to see that it was ranked a 2/10. This fact lingered for a few years until one day, a friend in academia made Tom aware of some research conducted by a graduate student out of Stanford. After reading the study, everything clicked together. Tom found more research that supported the startling conclusion: the most significant factor influencing test scores is parent income, not the quality of the schools.

Tom remembered that one of the things he liked about his neighborhood school was its racial and socioeconomic diversity. Sadly, this very diversity contributed to the school's poor rating. Then he remembered the decision of a recent buyer, a young family moving to Chicago. Although they wanted more diversity and a home in the city, they didn't feel they could afford the home they needed near the highly rated schools, so they settled for a home in the suburbs, with long commutes, less time with family, and little diversity, all in the name of chasing high ratings.

Creating the Ranking System

As luck would have it, a data science class was starting at Tom's coworking space, and Tom had an opportunity to enroll. For his class project, Tom utilized the much-researched value-add methodology to create a rating system for Chicago Public Schools. Later he expanded to the suburbs, and in early 2020, Tom's system was expanded to include 24 major metropolitan areas nationwide. Finally, in early 2021, SchoolSparrow's rating system was launched nationwide.

In early 2022, Tom's methodology was validated and improved to move from linear to non-linear multi-variable regression. This latest regression model is highly accurate at predicting average test scores for schools with similar socioeconomic demographics.

So What Now?

Every real estate search portal has a responsibility to display a more balanced view of school quality so that buyers can make more informed home-buying decisions. We'd appreciate it if you could help us by signing up on our supporter list.

OUR TEAM.

Tom Brown, Founder/CEO

Nate Hartmann, Co-Founder/CMO

Annelise Taylor, Content Creator

Jocelyn Bell, Content Creator

Sergei Ganz, Content Creator

Jake Meline, Content Creator

Kelly Davis, Content Creator

Nia Hurst, Content Creator